Over the last few years, you have probably found new ways to get things done from home: your job, homework, staying in touch with loved ones, and more. Technology has come a long way, but communicating and collaborating still leave room for improvement. The Windows 11 tips and tricks below can help.

We have gathered several Windows 11 tips and tricks here so that you can make the most of the updated version. The new release offers a simple user experience that quickly becomes familiar.

Top Windows 11 Tips and Tricks:

Get Started:

When you have Windows set to back up your apps, Get Started shows a list of the apps from your old device and lets you select which ones to load on your new device. It is usually better to restore your old photos, documents, and files to the upgraded PC than to start from scratch. The prompts let you choose what to migrate to the new computer, and the Get Started app then applies your preferences and settings. It can also transfer apps and programs.

Start and Taskbar:

Start and the Taskbar now sit front and center, so you can get to things with fewer clicks and swipes. Open Start to find anything: a centralized search covers both the web and your computer from one place, including browsers, tabs, and folders. You will also notice new visual elements and sounds, smooth animations, and new buttons, toggles, and fonts. The new layout and navigation make complex tasks easier.

Snap Assist and Desktop Groups:

These features help arrange apps on the desktop. If you have multiple windows open, drag one to the edge of the screen to turn on Snap Assist, which snaps the windows into an arranged grid and makes the most of the display space. Once you have upgraded to the new version, Windows remembers how you placed your apps, whether you use an external display or several displays.

When you plug the computer back in, everything returns to its position. Desktop Groups let you switch between multiple desktops quickly. For example, one desktop may have Word, Microsoft Edge, and Teams open, while another has PowerPoint, OneNote, and a music player. The new version has four standard pre-configured snap layouts, plus two extra ones for displays with an effective resolution of 1920×1080 or higher.

Widgets:

Do you want quick access to the things that matter most to you, like to-do lists, upcoming meetings, and news? Widgets in the new version's Taskbar put them in one place. To open them, tap the Widgets button on the Taskbar or swipe in from the left side of the display. Instead of hunting through separate apps, tabs, and pages, you see the content you curate, including personalized reminders, stocks, sports scores, social media, and local weather. To find topics and publishers, use the Interests page under Manage Interests.

Microsoft Store:

The Microsoft Store now has a new design. It offers a wide range of apps, shows, and movies, from casual gaming to professional editing. Windows and developers are also working together to bring you more content. The new Microsoft Store features tools such as Preview and Search that help you find what you want.

Microsoft Edge adds tools of its own, including Dark Web monitoring, automatic price comparisons, and vertical tabs, which make it easier to stay safe online, save money while shopping, and stay organized and focused. Password Monitor scans for matches between the passwords saved in the browser and a list of leaked credentials. If it finds a match, Edge prompts you to update that password. Edge can also suggest a secure password: for example, if you have recently joined a bank and are setting up your online account, it helps you create a strong password to keep the account secure.

At checkout, Microsoft Edge can help you save money by applying coupons to your order. Sleeping tabs also help you keep your focus on current projects.
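The leaked-credential check described above can be pictured as a simple lookup. The sketch below is only an illustration of the matching idea, not Edge's actual implementation, which this article does not describe; the leak list, site names, and function names are all hypothetical.

```python
import hashlib


def fingerprint(password: str) -> str:
    """Return a SHA-256 hex digest so the plain password is never compared directly."""
    return hashlib.sha256(password.encode("utf-8")).hexdigest()


# Hypothetical leak list for illustration; a real list would be far larger.
LEAKED = {fingerprint(p) for p in ("123456", "password", "qwerty")}


def needs_update(saved_passwords: dict[str, str]) -> list[str]:
    """Return the sites whose saved password appears in the leak list."""
    return sorted(
        site for site, pw in saved_passwords.items() if fingerprint(pw) in LEAKED
    )


# Hypothetical saved logins: one strong password, one leaked password.
saved = {"bank.example": "T7#vq!9xLm", "forum.example": "qwerty"}
print(needs_update(saved))  # ['forum.example']
```

Only the site with the leaked password is flagged, which corresponds to the point at which the browser would prompt you to change it.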

Chat Support from Microsoft Teams:

You can connect from your computer with any of your contacts through chat and audio or video calls, whether they use iOS, Android, PC, or Mac. You can stay on a call for up to a day, so you do not need to drop and dial back in. This is one of the most useful Windows 11 tips and tricks.

Touch, Voice, and Pen Inputs:

The digital pen, touchscreen, and voice typing features help you work more quickly than before. For example, you can record your voice on your phone and play it back to transcribe your words into text. If your computer has a microphone, voice typing can process your speech immediately and transcribe it to text.

Voice typing also detects inflection and rhythm to add essential punctuation. The new version supports writing and drawing, and it lets you annotate PDFs. With a digital pen, you get the best of both worlds, and you can personalize the new Pen menu with favorite apps for quick access.

Conclusion:

Smartphones are handy because of their touchscreens, but taking notes on them is not so simple. If your Windows 11 PC has a touchscreen, you can take notes instantly with a digital pen. Windows 11 also supports natural multi-finger gestures for quick navigation.

Frequently Asked Questions:

- What cool things can it do?

These are a few hidden features of the updated version:

- Multitasking features.

- Background apps permission.

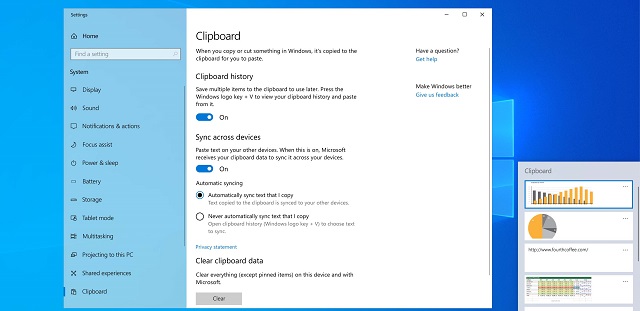

- Clipboard history features.

- Better security.

- Manage volume for individual apps opened on a desktop.

- Does it improve performance?

Compared to the earlier version, it has some potential to improve PC speed.

- What does it do differently?

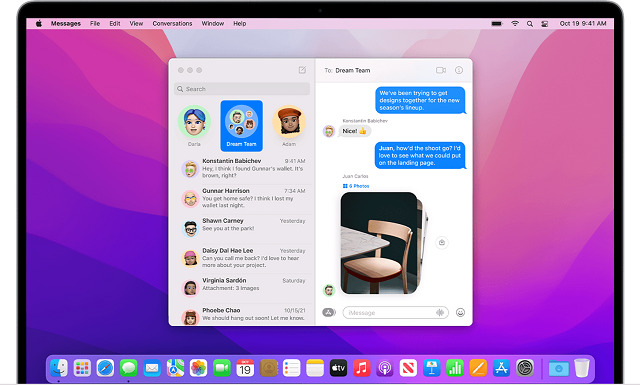

It comes with a new design built around a centered Start menu and Taskbar, which gives the OS a Mac-like interface. The design is clean, with rounded corners and pastel shades.

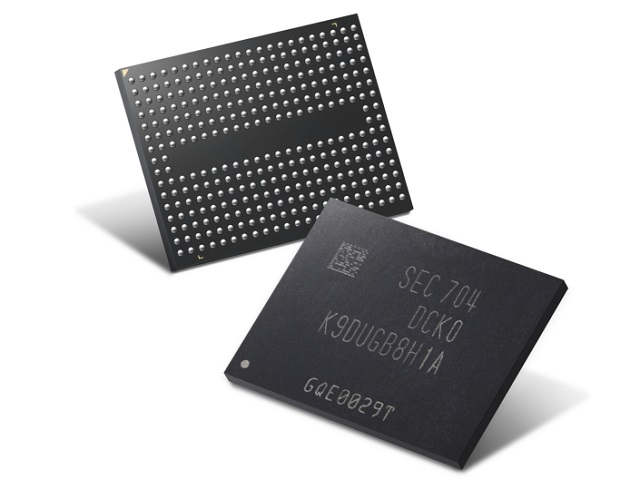

Flash storage comes with built-in solid-state chips, and each chip has an array of flash memory cells.

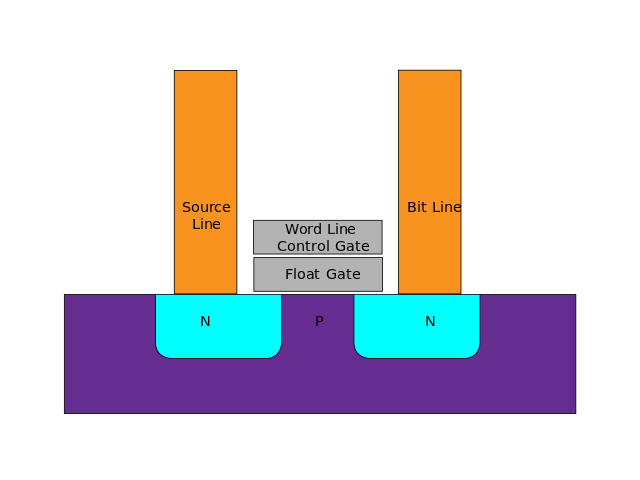

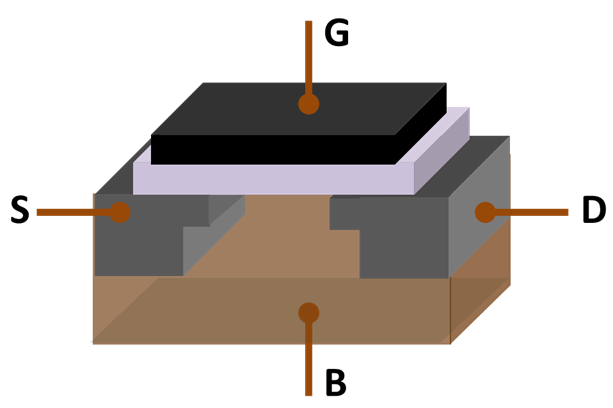

In flash memory, each cell resembles a MOSFET (metal-oxide-semiconductor field-effect transistor) but differs in its gate structure: a MOSFET has only one gate, whereas a flash cell has two. Each cell works as an electrical switch in which current flows between source and drain, controlled by a floating gate and a control gate. The control gate functions like the gate in an ordinary MOS transistor. The floating gate sits below it, between the control gate and the MOSFET channel, and is insulated entirely by an oxide layer.

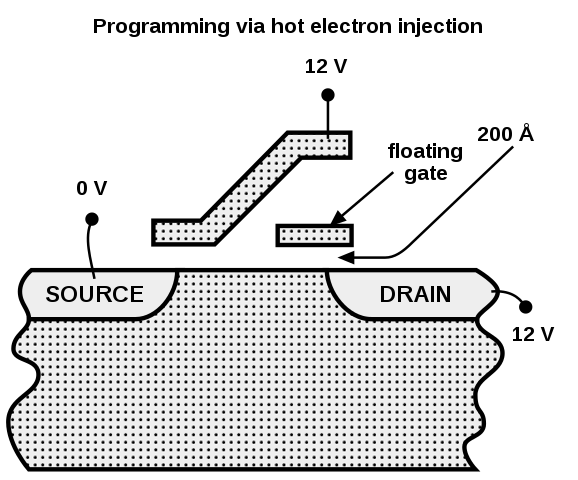

In NOR flash, one end of each cell is connected directly to ground and the other end directly to a bit line. This arrangement behaves like a NOR gate: when one of the word lines connected to a cell's control gate is brought high, the corresponding storage transistor pulls the output bit line low. NOR flash suits embedded applications that require discrete non-volatile memory. Its low read latency allows both direct code execution and data storage in a single memory product.

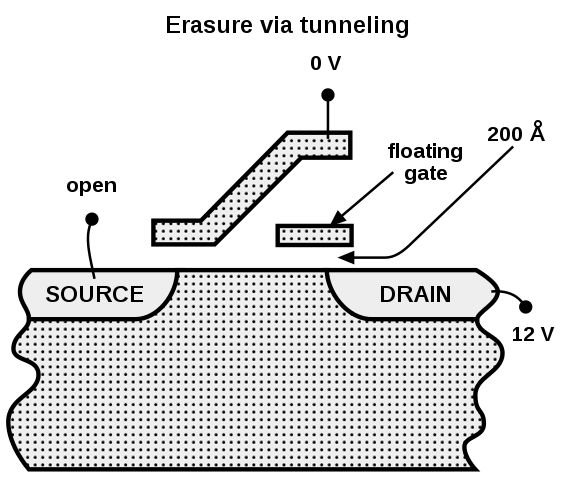

To erase a NOR flash cell, a high voltage of reverse polarity is applied between the control gate and the source terminal, pulling the electrons off the floating gate through quantum tunneling. Modern NOR chips are divided into multiple erase segments called blocks or sectors. Erasing is done block-wise, so all the cells in an erase segment are erased together. Programming of NOR cells is done one word or byte at a time.

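The difference between block-wise erasing and byte-wise programming can be sketched with a toy model. The class and method names below are hypothetical, and the rule that programming can only change bits from 1 to 0 (with erased cells reading as 0xFF) is a standard property of flash that the text above does not state, so treat it as an assumption of this sketch.

```python
class NorFlash:
    """Toy model: erase works per block, programming works per byte."""

    def __init__(self, blocks: int = 4, block_size: int = 16) -> None:
        self.block_size = block_size
        # Assumption: erased flash reads as all ones (0xFF in every byte).
        self.cells = bytearray([0xFF] * (blocks * block_size))

    def erase_block(self, block: int) -> None:
        """Reset every byte of one erase block to 0xFF in a single step."""
        start = block * self.block_size
        self.cells[start:start + self.block_size] = b"\xFF" * self.block_size

    def program_byte(self, address: int, value: int) -> None:
        """Program one byte; bits can only go from 1 to 0 until the next erase."""
        self.cells[address] &= value

    def read_byte(self, address: int) -> int:
        """Return the byte currently stored at the given address."""
        return self.cells[address]


flash = NorFlash()
flash.program_byte(3, 0x5A)
flash.program_byte(3, 0xF0)     # cannot set bits back to 1: stores 0x5A & 0xF0
print(hex(flash.read_byte(3)))  # 0x50
flash.erase_block(0)            # the whole block returns to 0xFF together
print(hex(flash.read_byte(3)))  # 0xff
```

Writing a new value over an already programmed byte only clears more bits, which is why a whole block has to be erased before its bytes can hold arbitrary data again.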

How to Enable the Clipboard App:

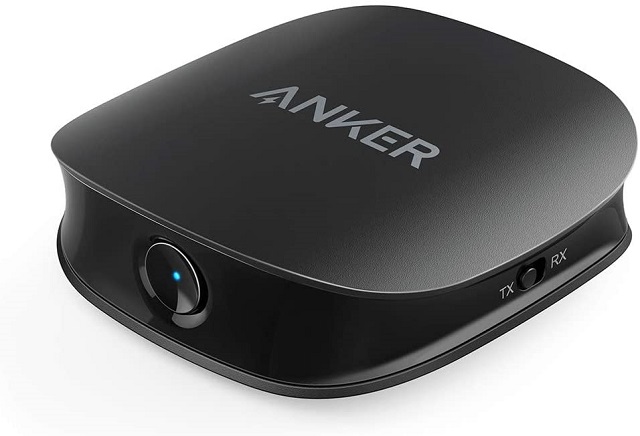

Description: The product is aptX Low Latency certified. Paired with a speaker or headphones that support aptX Low Latency or FastStream, it delivers a “no lip-sync delay” experience, so audio stays in sync with the video for everything you watch.

Description: The product uses Bluetooth 5.0 (CSR8675) and is compatible with Qualcomm aptX HD, aptX Low Latency, aptX, and AAC decoding. If your device supports both codecs, you can switch between aptX HD and aptX Low Latency.

Description: The product has a 2-in-1 design that combines a transmitter and a receiver in one body, so it can both send and receive Bluetooth audio.

Description: Along with a premium CSR8670 chipset, the model is compatible with Bluetooth V5.0. It supports aptX and aptX Low Latency in both TX and RX modes. aptX lets you enjoy CD-quality sound wirelessly over Bluetooth connections with TVs, phones, tablets, and other aptX source devices, while aptX Low Latency keeps wireless audio synchronized with video so that you do not notice any audio delay.

Description: The product lets you relax on your couch and watch TV with headphones without disturbing anyone else in the room. It also lets you stream music from a phone or tablet to speakers easily.

Many phones support ping functionality, but recently released phones do not come with the feature enabled. If you want to ping such a device, you will need a specialized app on the phone; PingD and Google Find My Phone are a few examples that help return the ping request. Make sure the app is installed and configured on the phone before you proceed. Remember that you cannot ping a phone that is turned off. A firewall installed on the phone can also prevent other devices from pinging it, and if AP Isolation is turned on at the router, other devices may not be able to reach the phone.

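Once the phone is set up to answer, you can send the ping from a computer on the same network. The sketch below wraps the operating system's own `ping` command; the function names and the address 192.168.1.50 are placeholders, and it assumes a `ping` executable is available on the computer.

```python
import platform
import subprocess


def build_ping_command(host: str, count: int = 1) -> list[str]:
    """Build the ping command line; Windows uses -n for the count, others use -c."""
    flag = "-n" if platform.system().lower() == "windows" else "-c"
    return ["ping", flag, str(count), host]


def is_reachable(host: str, timeout_s: float = 5.0) -> bool:
    """Return True if ping exits with code 0, which is treated here as a reply."""
    try:
        result = subprocess.run(
            build_ping_command(host), capture_output=True, timeout=timeout_s
        )
    except (subprocess.TimeoutExpired, FileNotFoundError):
        # No answer within the timeout, or no ping executable on this computer.
        return False
    return result.returncode == 0


# 192.168.1.50 is a placeholder for the phone's address on the local network.
print(build_ping_command("192.168.1.50"))
```

Calling `is_reachable("192.168.1.50")` with the phone's real address should return `False` when the phone is off, firewalled, or hidden behind AP Isolation, which matches the cases listed above.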

Description: SanDisk offers this product in 32 GB, 64 GB, 128 GB, 256 GB, 512 GB, and 1 TB storage options. It has a maximum read speed of 170 MB per second and a maximum write speed of 90 MB per second. It is well suited to shooting and storing 4K video with a digital camera.
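To get a feel for what those speeds mean in practice, here is a rough calculation. It is a best-case sketch that assumes the quoted maximum speeds are sustained for the whole transfer and that 1 GB equals 1000 MB; the 64 GB of footage is a hypothetical amount, and the function name is illustrative.

```python
def transfer_seconds(size_gb: float, speed_mb_per_s: float) -> float:
    """Best-case time to move size_gb gigabytes at a sustained speed (1 GB = 1000 MB)."""
    if speed_mb_per_s <= 0:
        raise ValueError("speed must be positive")
    return size_gb * 1000 / speed_mb_per_s


# Hypothetical 64 GB of footage at the quoted maximum write and read speeds.
write_s = transfer_seconds(64, 90)
read_s = transfer_seconds(64, 170)
print(f"write: {write_s / 60:.1f} min, read: {read_s / 60:.1f} min")
```

At these rates, writing 64 GB takes roughly 12 minutes and reading it back roughly 6 minutes; real transfers are usually slower because maximum speeds are rarely sustained.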

Description: It is best for the Nintendo Switch. The Switch generally has 32 GB or 64 GB of internal storage, depending on the model, so if you want to store plenty of downloaded games and related data on the handheld console, you can go with this one.

Description: Are you using two cameras or devices, one compatible with an SD card and the other with a microSD card? Then you should use this card with an adapter. The product comes with an SD adapter and a MobileLite Plus USB card reader, and the reader is available as part of a discounted bundle.

Description: Do you want huge storage from a high-end flash memory card? Then this product is ideal. It is slightly on the costlier side, but the features justify the price.
